The Great AI Misallocation

Why Your Tool Strategy Is Failing

Which counts more on your team:

- the frequency of decisions made, or the clarity of each of them?

- the quantity of deliverables over time, or the quality of each deliverable?

- the number of actions taken, or the number of related actions they prevent?

One answer invites complexity, the other simplicity.

While there is no single right answer for all teams all the time, some answers are more right than others for your team today.

In this three-article series, we’ll explore how to find effective answers to questions like these, answers that scale into the Age of AI. Before AI, it was okay to be opaque, because work moved at merely human speeds. Now that parts of our work are beginning to move at inhuman speeds, anything less than clear and machine-interpretable becomes at best a bottleneck and at worst a train wreck in the making.

Masquerading As Transformation

Across industries, leadership teams are reviewing project dashboards that present a compelling picture of activity. Vendor roadshows are full, licenses for generative AI assistants have been procured, and internal pilot programs are reporting high levels of initial engagement.

This looks like transformation, as promised. It allows organizations to report progress to their boards and receive approving smiles in return. However, a deeper audit of the fundamental business metrics underneath the field of green indicators reveals a starkly different reality: decision cycles remain the same, cost structures break instead of bend, and the flow of organizational authority is unchanged. The accumulated success stories and announcements mask a persistent stasis in which significant capital expenditure yields motion without perceptible business impact.

This disconnect stems from a fundamental categorization error about the technology itself. Most enterprise investment strategies treat Large Language Models (LLMs) and generative artificial intelligence agents (AI agents) as replacement technologies, akin to the more familiar robotic process automation (RPA). Leaders deploy these tools expecting them simply to execute tasks and deliver easy efficiency gains. Yet this approach ignores the inherent nature of the current wave of AI. Unlike deterministic automation that follows rigid rules, generative systems function probabilistically and require constant interaction, context, and oversight to deliver value. They don’t follow rules; they predict patterns.

Not One For One

Empirical evidence now exposes the structural flaw in this replacement-focused strategy. A recent Endeavor Intelligence analysis of data from the TechWolf Skills Intelligence Index reveals an augmentation-to-automation ratio of 1.9. In other words, for every task a system can fully replace, there are nearly two that it can only augment.

Furthermore, 63.2 percent of enterprise work remains fundamentally human-led. This distribution confirms that the introduction of AI does not remove the human operator, but rather elevates them into a critical role of interpretation, oversight, and decision quality.

In practice, that elevated role is not distributed evenly. It concentrates in a small number of shared functions that sit underneath everyone else’s work. Teams like DevOps, SRE, QA, model evaluation, risk, and certain flavours of operations rarely lead AI roadmaps, yet they are the ones asked to integrate, monitor, and sign off on what other units are building with AI. The same skills of deployment, observability, access control, testing, and policy enforcement are used across many job families, but the actual workload and accountability tend to accumulate in these shared teams.

As a result, AI does not just “augment the individual” in a generic sense. It quietly centralizes AI-mediated work, risk, and decision load into specific parts of the organisation. This is what we call undercurrent pressure: the strain on the connective-tissue roles that have become the real control plane for AI, even as most org charts still treat them as back-office support.

The strategic implication is that organizations are not deploying a passive tool but are effectively hiring a junior partner that demands supervision. This shift from tool to partner exposes the critical vulnerability in the standard adoption playbook, because collaboration requires a foundation of trust that most implementation plans ignore. Omitting this requirement leaves companies spending millions on inputs such as licenses and prompt engineering, while the valuable outcomes remain locked behind a barrier of workforce hesitation and structured distrust.

Changing Change Management

In most organizations, “change management” for AI is still scoped as communication, training, and a handful of showcase use cases. That lens made sense when tools were optional productivity enhancers at the edge of the workflow. It is inadequate when the tools sit inside the critical path of how decisions are made and how work moves. What actually determines success is not whether people have heard of the initiative or attended a webinar, but whether roles, incentives, and decision rights have been updated to reflect the new human-AI split.

For example, if a Sales Manager is still measured on individual activity volume, they will ignore an AI that slows them down in the short term, no matter how “transformational” the slides claim it is. Likewise, if a DevOps Lead still carries full accountability for uptime without any say in how AI-enabled changes are released, they will naturally pump the brakes on AI adoption. In other words, the limiting factor is not awareness; it is the mismatch between the old performance system and the new way work is supposed to happen.

Reliance on aggregate data obscures the operational realities that determine success. While the portfolio-wide ratio stands at 1.9, this average hides a deep polarization between functional domains. The research dissects the enterprise to reveal that a Software Engineer faces a completely different transformation pressure than a Sales Lead. Software engineering emerges as a scale engine with high technical readiness for agentic workflows, where the primary challenge is managing volume. Conversely, functions such as Sales and Account Management appear as stability anchors where the human element remains dominant. For these roles, the introduction of generative AI is less about accelerating production and more about enhancing the quality of high-stakes interpersonal judgments.

This structural divergence reveals exactly where, and why, AI transformation stalls. In high-velocity environments like coding, the friction is technical. In high-judgment environments like sales or strategy, the friction is existential. When an AI pilot meets the realities of these human-centric domains, it does not fail because the model hallucinates or because the interface lags. It fails because it hits a Permission Wall. The organization has not explicitly defined who has the authority to sign off on an AI-generated output, so the default organizational response becomes an indefinite… pause. The pilot works technically, but the process is ghosted politically.

Don’t Jump The Queue

We can see the same pattern if we stop looking at AI pilots and start looking at task queues. Under the surface of green dashboards and successful proofs of concept, shared functions begin to collect a growing backlog of ambiguous work:

- partial integrations that need hand-holding

- model behaviours that need exception handling

- edge cases that need judgment

- controls that need threading through new workflows

While none of this appears in the headline metrics of “AI adoption”, it still shows up in the business. Look for longer lead times, more interrupts, and rising cognitive load in exactly those teams that keep the organisation safe and reliable. Undercurrent pressure is what happens when AI accelerates activity at the edges of the organisation while the middle (the gatekeepers, integrators, and evaluators) quietly becomes the limiting factor, without anyone explicitly planning for it.

This is the structural origin of the Trust Gap: it is not a sentiment of fear, but a vacuum of authority. Without a clear mechanism to verify and approve the machine’s work, high-performing teams will simply bypass the tool in favor of their own accountability. At least they know how that works.

Analysis of successful implementations across diverse sectors reveals a consistent pattern regarding the human element of transformation. While technical deployment is often measured in days, the behavioral adaptation required to sustain value operates on a much longer timeline. Our review of live production environments confirms that access to a tool does not guarantee adoption. The prevailing assumption that widespread availability coupled with standard training will automatically yield productivity is a clear fallacy.

In reality, the friction of change remains high even when the new technology promises to reduce effort. Employees do not reject these tools because they lack the skill to use them. They reject them because the tools fail to integrate seamlessly into the critical path of their daily responsibilities. Sustainable adoption only occurs when the solution disappears into the workflow itself. Success correlates directly with the deliberate redesign of the operating context rather than the promotional energy behind a launch. When the friction of the old way exceeds the friction of the new way, trust is established through use rather than abuse. In other words: utility over rhetoric.

AI Adoption In Reality

Teams adopt AI when three conditions are met:

- the new workflow is clearly defined,

- the ownership of decisions is unambiguous, and

- the surrounding system of measures, reviews, and rewards reinforces the new behaviour.

Where any of those three is missing, adoption quietly stalls. We experiment in sandboxes, but fall back on familiar methods when the stakes are higher. Why? Because the “old way” is still what our managers, KPIs, and audit trails understand. Effective change management for AI looks less like a launch campaign and more like infrastructure work: redesigning workflows, crafting decision gates, and setting performance indicators so that the safest, easiest way to succeed is to use the new system. Route around it, back to how things used to work, and you get friction.

Though AI has changed many things in the world around us, some things remain painfully the same: buying licenses and running pilots still don’t count as progress. We cite the 1.9 augmentation ratio from the TechWolf data to show that, in many cases at least, AI is a partner rather than a replacement. Since we cannot partner without trust, the current strategy of buying tools without building trust infrastructure is a misallocation of capital.

Losing Trust in the Age of AI

We all know what it’s like to build trust over time with human coworkers, and for most of us it happens naturally. Then there are those cases where we lose trust, and have to work to rebuild it. This is happening more often now, especially as we learn to work with AI.

Perhaps you’ve received work from someone that still contained AI annotations or flat-out errors, obviously not reviewed for even two seconds, yet presented to you as “done.” Or worse yet, perhaps you were the one who accidentally handed something like this off to someone else, and felt embarrassed when your haste and lack of validation were called out.

We can make policies and re-engineer workflows to deal with these more obvious cases of trust breaches, and we should. But these are not the only ways that trust fails in the Age of AI. Here’s a story of another failure path:

A few months ago, one of the authors was working for a client and one of their trusted university partners. We were adapting some of their existing eLearning content, and in a previous meeting they’d asked how we were using AI and let us know about internal AI tooling discussions they were having. They sounded very well informed, like they were already at the leading edge of their academic environment.

Our usual process for this workflow is to mock up an example to help focus the discussion, and in this case, we did so using AI. I took a few screenshots of their existing created-by-a-human-artist artwork and asked AI to render some new images in the same style to use as placeholders in our mockup, which I presented in our next meeting.

What happened next was so unexpected I didn’t even catch it at first. As I ran through our sample course, the university partners fell unusually silent. Then, they exploded with a mix of panicked questions and pointed language:

- “Where did you get these images?”

- “Why are we changing process, I don’t understand…who decided this?”

- “We can’t use this! Isn’t this illegal? It’s clearly unethical.”

- “Do we have to go pay our artist now? We didn’t budget for this.”

- “You have put us in a very difficult situation here.”

From our perspective, we were doing the same thing we’d always done: presenting a mockup of content that would still go through the same SME and peer review checkpoints, be vetted against the same instructional rubrics, and follow the same process we’d been using for years. But because the product looked finished, they thought it was finished. Our words seemed to contradict our actions. They saw a level of polish previously seen only at the final approval stage, and assumed we had jumped straight to it. Worse yet, they thought we’d corrupted their work by sharing it with AI without their express consent. They were hurt, scared, and shocked by what we’d done. In a routine 30-minute check-in on a Tuesday, years of working trust evaporated.

Gaining Trust in the Age of AI

For damage control, our team reconvened later that day. We resolved to double down and treat this as an educational opportunity for both sides. We knew we needed to support their evolution and understanding of AI and process. They wanted this; they just didn’t know what it meant or how to navigate it. We’d have to slow down to human speeds, and add some more padding in the delivery timeline for this project.

Over the course of the next few months, we scheduled more time for discussion. We went back to the 100% human workflow, and asked, on an asset-by-asset basis, for permission to try a specific experiment with AI. We shared the results, showed them how we were doing what we were doing, and shared the in-progress governance policies we were working with. We got them curious, and excited to help steer the process again. We made it clear that their subject-matter expertise and context are only more important now, not less. We helped them see that deliverables can be higher-touch and higher-speed at the same time, and that higher levels of decisions can result in higher-quality work than we’ve been able to achieve before.

This project came in on budget, but over time. We were willing to pay that cost to rebuild trust. The next project came in well under budget, and in record time. We’ve been able to accelerate project timelines for all planned collaborations in 2026. Most importantly, they are now our biggest advocate in the entire system of 100+ university partners. Their voice is helping attract more of exactly the kinds of partners we’re looking for: partners who are hungry to learn how they too can do more with less, producing work that is faster, higher-quality, and more controlled. We’re happy to show them what we know.

One of the main things we’ve learned ourselves is how workflows change. Validation, which we typically do at the start for scope and then bring back at the end of a workflow for sign-off, instead becomes a constant. When working with AI, or with anyone else who is using AI (now a safe default assumption for almost anyone), checking is inherent in every step and substep. Realignment to the scoped spec happens at every stage gate, and that initial spec is everything.

How Any Innovation Spreads (Or Doesn’t)

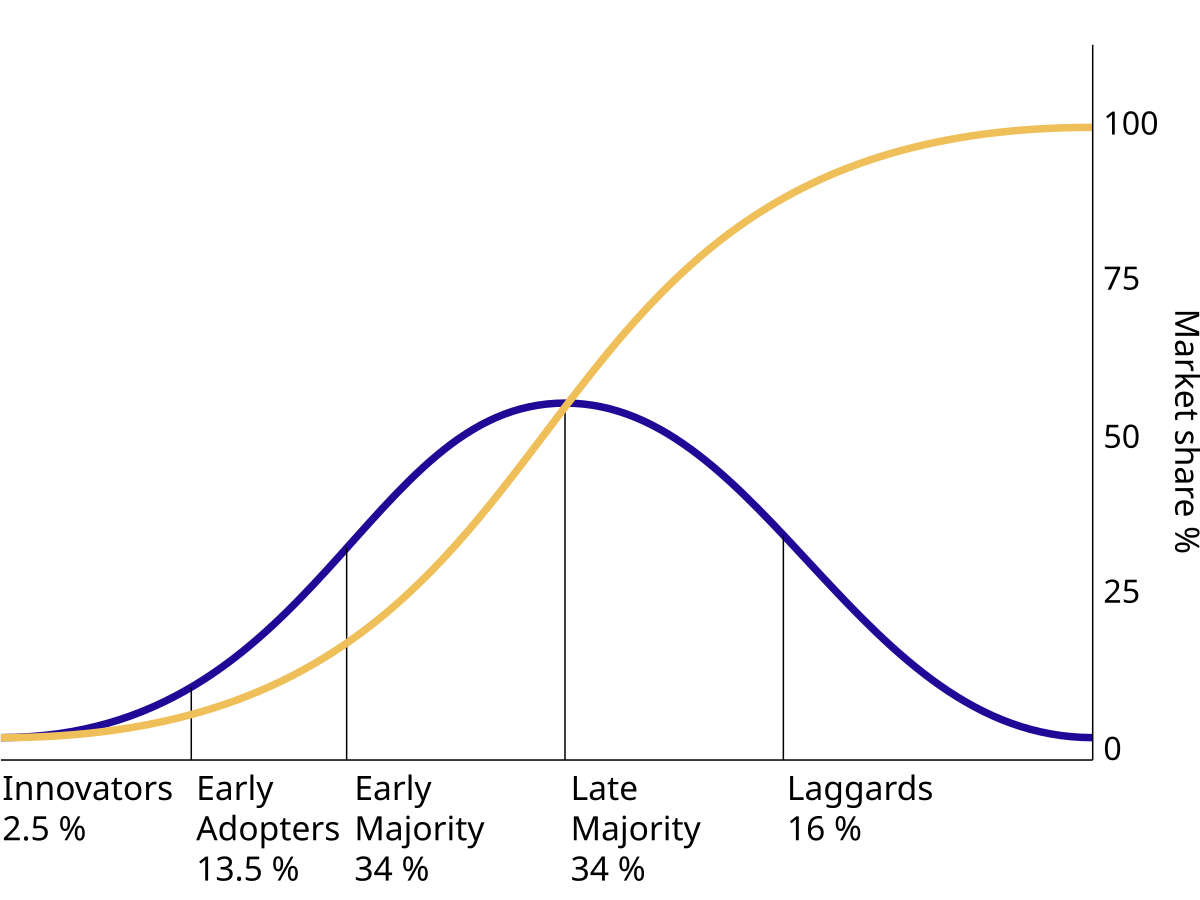

You may be familiar with the Diffusion of Innovation curve. Ev Rogers mapped how innovations spread more than 60 years ago. Then in the 1990s, Geoff Moore showed us where they stall: the Chasm between Early Adopters and the Early Majority. AI is currently sitting right in that chasm, where adoption usually breaks. It is the job of anyone creating change to bridge that gap now.

What hasn’t changed over the last half century of innovations, from personal computers to the internet to mobile devices to social media to AI, is clear: aiming to change everybody at once is a recipe for failure. “Everybody” doesn’t need to do anything. Instead, we simply need the early adopters and the catalysts to get along and to trust each other; then the entire curve connects and flows. This is how innovations diffuse through society, and historically it’s the only way they ever have.

The Yes Problem

The takeaway is simple: we need to stop asking “which tool?” and start asking “which teams and which workflows?” The right answers are as unique and trust-based as the interplay between the teams and workflows themselves. Asking this question is itself a response to that same undercurrent pressure, a way to work with it rather than against it.

The worst choice is to ignore it. When leaders cannot point to where the undercurrent pressure lives in their organisation, they’ve blinded themselves to where things will break first. Taken together, this is why we pay close attention to undercurrent pressure: the strain on the shared functions that quietly carry everyone else’s AI experiments, while the organisation still believes the hard part is “change communications” rather than redesigning how work and decisions actually flow.

Once the misallocation can be seen clearly, “which AI tool should we buy next?” sounds woefully like magical thinking. The question shifts to a much simpler, and much more awkward, human one: “when an AI system recommends something that matters, where does a safe yes actually live in this organisation?”

When AI suggests a pricing change, a contract clause, an ops adjustment or a customer response, who is allowed to approve it? What evidence would you need to feel comfortable putting your own name behind that decision? And how many of those decisions could you or your team handle before becoming a bottleneck for everyone else?

Most organisations do not have explicit answers to such questions. They assume that smart people will just “work it out” like they usually do, that pilots will somehow grow into production projects, and that good intentions will bridge the gap between experiment and accountability. In practice, that vagueness is precisely what creates the Permission Wall. When nobody quite knows who owns the approval, the safest move is neither to approve nor to reject, but to stall, route around the new system, and quietly revert to doing things the more familiar way.

Yes, we can buy more tools. Yes, we can launch more pilots. Yes, of course we can always generate more activity. But none of that moves the underlying reality. Until we can point at a process and a person and say, “this is how a machine recommendation becomes a decision we stand behind,” we have not yet engineered AI into our organisation. All we have accomplished is moving the bottleneck just out of sight.

Up Next

In part two of this three-article series, we’ll look deeper into solutions to The Yes Problem, and why scaling AI requires a new approach to governance.

This article was written entirely by humans, without AI assistance. If you’ve enjoyed it, please let us know! Your input shapes our future work. Spelling is a mix of British and American English; if you’ve found a typo or other human error within these words, kindly let us know as well.

For more on Markus Bernhardt and Endeavor Intelligence, visit EndeavorIntel.com. There you can download your free copy of The Endeavor Report™ and other cutting-edge AI research.

For more from Sam Rogers and Snap Synapse, sign up for our Signals & Subtractions newsletter to get new insights every Monday on moving from AI promise to AI practice.